The modern enterprise data landscape resembles a sprawling metropolis with information flowing through countless systems, applications, and storage repositories. This complex ecosystem, while rich with potential insights, often operates in silos, creating significant challenges for organizations striving to become truly data-driven. The traditional approach of moving data to centralized warehouses or lakes has proven increasingly inadequate, often creating more complexity than it resolves. Data Fabric has emerged as a transformative architectural approach, promising not just to connect these disparate data sources but to weave them into a cohesive, intelligent, and actionable whole.

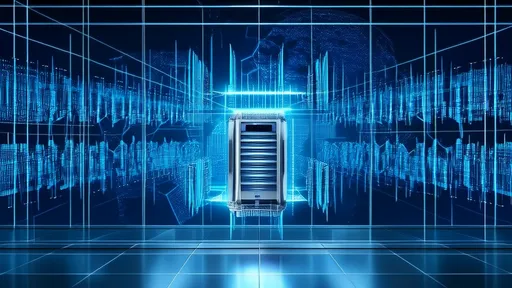

At its core, Data Fabric is a unified data management framework designed to facilitate seamless access and data sharing in a distributed data environment without requiring physical relocation. Unlike monolithic data platforms, a Data Fabric is a dynamic, flexible architecture that sits as a virtual layer on top of existing data infrastructure. It leverages continuous analytics over existing, discoverable, and inferenced metadata assets to support the design, deployment, and utilization of integrated and reusable data across all environments, including hybrid and multi-cloud setups. The ultimate goal is to provide the right data, to the right user, at the right time, and in the right format, all while ensuring robust governance and security.

The power of a Data Fabric lies in its ability to create a logical unification of data. Instead of undertaking the massive and often disruptive project of physically consolidating petabytes of information into a single repository, the fabric creates a virtualized access layer. This means an analyst in marketing can query customer information residing in an on-premises CRM system, a cloud-based data lake, and a real-time streaming service as if it all lived in a single database. The fabric handles the complexity of connecting to these diverse sources, understanding their schemas, and translating queries into each source's native language, all in real time. This abstraction is the key to unlocking data's potential without the prohibitive costs and risks of large-scale migration projects.
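To make the idea of a virtualized access layer concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular Data Fabric product works: the `VirtualLayer` class, the dataset names, and the sample rows are all hypothetical, an in-memory SQLite database stands in for the CRM, and a plain list stands in for the data lake.

```python
import sqlite3
from typing import Callable, Dict, Iterable, List

Row = Dict[str, object]


class VirtualLayer:
    """Hypothetical access layer: logical dataset names map to fetch functions."""

    def __init__(self) -> None:
        self._sources: Dict[str, Callable[[], Iterable[Row]]] = {}

    def register(self, name: str, fetch: Callable[[], Iterable[Row]]) -> None:
        # The data stays where it is; only the way to reach it is recorded.
        self._sources[name] = fetch

    def join(self, left: str, right: str, key: str) -> List[Row]:
        # Index the right-hand source by key, then merge matching rows.
        right_index = {row[key]: row for row in self._sources[right]()}
        merged: List[Row] = []
        for row in self._sources[left]():
            match = right_index.get(row[key])
            if match is not None:
                merged.append({**row, **match})
        return merged


# Stand-in for an on-premises CRM (assumption: a single customers table).
crm = sqlite3.connect(":memory:")
crm.execute("CREATE TABLE customers (customer_id INTEGER, name TEXT)")
crm.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ada"), (2, "Grace")])


def fetch_crm() -> List[Row]:
    cursor = crm.execute("SELECT customer_id, name FROM customers")
    return [{"customer_id": cid, "name": name} for cid, name in cursor]


# Stand-in for records held in a cloud data lake.
lake_purchases: List[Row] = [
    {"customer_id": 1, "last_purchase": "2025-08-01"},
    {"customer_id": 3, "last_purchase": "2025-07-15"},
]

layer = VirtualLayer()
layer.register("crm.customers", fetch_crm)
layer.register("lake.purchases", lambda: lake_purchases)

# One logical query spans both systems; nothing was copied into a warehouse.
unified = layer.join("crm.customers", "lake.purchases", key="customer_id")
```

A real fabric would push filters down to each source and translate queries rather than fetching whole tables, but the shape is the same: consumers address logical names, and the layer resolves them to physical systems.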

A critical enabler of this seamless connectivity is the sophisticated use of active metadata. Traditional, passive metadata is akin to a library's card catalog: a static list of what exists and where. A Data Fabric builds on this concept by employing active, continuously analyzed metadata. It uses machine learning and knowledge graphs not only to catalog data assets but also to understand their relationships, quality, usage patterns, and lineage. This intelligence allows the fabric to make recommendations, automate data integration tasks, and even enforce governance policies proactively. For instance, it can automatically tag data containing personally identifiable information (PII) and apply the correct masking policies regardless of which system the data originates from.
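The PII example can be sketched in a few lines of Python. This is a deliberately simplified illustration: a regular expression for email addresses stands in for the machine-learning classifiers described above, and the function names (`tag_pii_columns`, `apply_masking`) and the masking rule are assumptions made for the example.

```python
import re
from typing import Dict, List, Set

Row = Dict[str, object]

# Crude stand-in for a learned PII classifier: matches email-shaped strings.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def tag_pii_columns(rows: List[Row]) -> Set[str]:
    """Return the names of columns whose values look like email addresses."""
    tagged: Set[str] = set()
    for row in rows:
        for column, value in row.items():
            if isinstance(value, str) and EMAIL_PATTERN.match(value):
                tagged.add(column)
    return tagged


def mask(value: str) -> str:
    """Hypothetical masking policy: keep the first character, hide the rest."""
    return value[0] + "***" if value else value


def apply_masking(rows: List[Row], pii_columns: Set[str]) -> List[Row]:
    """Apply the same masking policy to tagged columns, whatever the source."""
    return [
        {
            column: (mask(value) if column in pii_columns and isinstance(value, str) else value)
            for column, value in row.items()
        }
        for row in rows
    ]


crm_rows: List[Row] = [{"id": 1, "contact": "ada@example.com"}]
lake_rows: List[Row] = [{"event": "login", "user_email": "grace@example.org"}]

# The same tagging and masking logic runs against both sources.
masked_crm = apply_masking(crm_rows, tag_pii_columns(crm_rows))
masked_lake = apply_masking(lake_rows, tag_pii_columns(lake_rows))
```

The point of the sketch is that the tag lives in metadata and the policy keys off the tag, so neither source system needs its own masking code.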

For the modern enterprise operating in a multi-cloud world, the Data Fabric architecture is particularly potent. Companies are no longer tied to a single cloud provider; they leverage the best services from AWS, Azure, Google Cloud, and others, while often maintaining critical systems on-premises. This creates a highly distributed data estate that can be a nightmare to manage. A well-implemented Data Fabric acts as the universal translator and connector for this environment. It provides a consistent framework for data governance, security, and access control that spans these different domains, helping to insulate the organization's data strategy from further technological shifts and vendor changes.

The implications for data governance and compliance are profound. In an era of stringent regulations like GDPR and CCPA, knowing where your data is, who is using it, and for what purpose is non-negotiable. The active metadata and knowledge graphs within a Data Fabric provide an unprecedented level of visibility and control. Data lineage is no longer a manual, error-prone process but an automated, real-time map of data movement and transformation. Security policies can be defined once and applied universally across the entire data landscape. This transforms governance from a bureaucratic hurdle into an integrated, automated, and value-added function that builds trust in data and accelerates its consumption.
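Automated lineage is, at its simplest, a directed graph of "this dataset was derived from that one" edges that can be walked on demand. The Python sketch below illustrates that idea only; the `LineageGraph` class and the dataset names are hypothetical, and a production fabric would capture these edges from pipeline metadata rather than from manual `record` calls.

```python
from collections import defaultdict
from typing import DefaultDict, List, Set


class LineageGraph:
    """Hypothetical lineage store: each dataset maps to its direct parents."""

    def __init__(self) -> None:
        self._parents: DefaultDict[str, Set[str]] = defaultdict(set)

    def record(self, source: str, target: str) -> None:
        # Called whenever a job reads `source` and writes `target`.
        self._parents[target].add(source)

    def upstream(self, dataset: str) -> Set[str]:
        """Return every dataset that feeds into `dataset`, directly or not."""
        seen: Set[str] = set()
        stack: List[str] = [dataset]
        while stack:
            current = stack.pop()
            for parent in self._parents.get(current, ()):
                if parent not in seen:
                    seen.add(parent)
                    stack.append(parent)
        return seen


lineage = LineageGraph()
lineage.record("crm.customers", "staging.customers")
lineage.record("staging.customers", "marts.churn")
lineage.record("lake.events", "marts.churn")

# Answers "where did this report's data come from?" without manual tracing.
churn_sources = lineage.upstream("marts.churn")
```

With a graph like this, a compliance question such as "which reports are built on CRM data?" becomes a traversal rather than an investigation.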

Perhaps the most tangible benefit for business users is the radical acceleration of time-to-insight. By a commonly cited estimate, data scientists and analysts spend up to 80% of their time simply finding, cleaning, and preparing data for analysis. A Data Fabric aims to reduce this "data prep" overhead by providing a self-service data marketplace. Business users can search for data assets using natural language, see their quality and provenance at a glance, and access them through standardized APIs or tools like SQL without needing deep technical knowledge of the underlying systems. This supports a truly data-driven culture where innovation is accelerated and decisions are based on a comprehensive view of the enterprise.
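As a rough sketch of what searching such a marketplace might look like, the Python below ranks catalog entries by keyword overlap with the query and breaks ties on a quality score. Keyword matching is only a crude stand-in for natural-language search, and the `CatalogEntry` fields, the scores, and the sample entries are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class CatalogEntry:
    name: str
    description: str
    quality_score: float  # hypothetical score between 0 and 1


def search(catalog: List[CatalogEntry], query: str) -> List[CatalogEntry]:
    """Rank entries by shared words with the query, then by quality score."""
    terms = set(query.lower().split())
    scored: List[Tuple[int, float, CatalogEntry]] = []
    for entry in catalog:
        words = set(entry.description.lower().split())
        overlap = len(terms & words)
        if overlap:
            scored.append((overlap, entry.quality_score, entry))
    # Sort on the numeric fields only, best matches first.
    scored.sort(key=lambda item: (item[0], item[1]), reverse=True)
    return [entry for _, _, entry in scored]


catalog = [
    CatalogEntry("crm.customers", "customer contact records from the crm", 0.9),
    CatalogEntry("lake.clicks", "raw web click events", 0.6),
    CatalogEntry("marts.churn", "customer churn scores by month", 0.8),
]

results = search(catalog, "customer churn")
result_names = [entry.name for entry in results]
```

Returning the quality score alongside each hit is what lets a business user judge an asset before requesting access to it.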

Implementing a Data Fabric is not merely a technological installation; it is a strategic journey that requires a shift in both technology and mindset. It begins with a clear assessment of the current data landscape and a definition of key use cases that will deliver immediate business value. Success hinges on strong data governance principles established from the outset and cross-organizational collaboration between IT, data engineering, and business units. The architecture should be built incrementally, starting with a focused domain, proving its value, and then expanding its reach across the enterprise. Choosing the right technology partners and platforms that support open standards is crucial to avoid vendor lock-in and ensure long-term flexibility.

In conclusion, as data volumes explode and architectures become more distributed and complex, the traditional centralized paradigm is breaking down. Data Fabric represents the next evolution in data management: a responsive, intelligent, and unified layer that connects the disparate threads of the enterprise data landscape. It is the foundational architecture that enables organizations to overcome data silos, automate governance, and finally deliver on the long-promised goal of seamless data access. For any enterprise aiming to thrive in the digital age, weaving a robust Data Fabric is no longer a luxury but a strategic necessity.

By /Aug 26, 2025
